Must take permission before launching AI models, safeguard electoral process: Centre to Big Tech

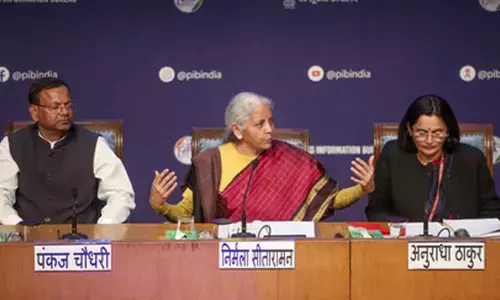

New Delhi: The Centre on Saturday came down hard on big internet and social media platforms over the misuse of AI, saying that intermediaries must not permit any bias or discrimination or threaten the integrity of the electoral process, and must obtain the government's permission before launching any AI model in the country.

Union Minister of State for Electronics and IT Rajeev Chandrasekhar said it has come to the notice of the IT Ministry that intermediaries or platforms are failing to meet the due-diligence obligations outlined under the IT Rules, 2021.

In addition to the advisory issued in December last year, MeitY has now advised all intermediaries and platforms to also ensure compliance with respect to AI-generated user harm and misinformation, especially deepfakes.

The digital platforms have been asked to comply with the new guidelines with immediate effect and to submit an action-taken-cum-status report to the Ministry within 15 days of the advisory.

"In light of the recent Google Gemini AI controversy, the advisory now specifically deals with AI. The digital platforms have to take full accountability and cannot escape by saying that these AI models are in the under-testing phase," said the minister.

Social media intermediaries must label AI platforms that are under testing, obtain the government's permission, and seek end users' consent after informing them that the AI models and software are prone to errors, so that citizens are aware of the consequences.

According to the new guidelines, all intermediaries or platforms must ensure that their use of AI models, LLMs, generative AI, software or algorithms "does not permit its users to host, display, upload, modify, publish, transmit, store, update or share any unlawful content as outlined in the Rule 3(1)(b) of the IT Rules or violate any other provision of the IT Act".

The use of under-testing or unreliable AI models and their availability to users on the Indian internet "must be done so with explicit permission of the government of India and be deployed only after appropriately labeling the possible and inherent fallibility or unreliability of the output generated," according to the new guidelines.

Further, a 'consent popup' mechanism may be used to explicitly inform users about the possible and inherent fallibility or unreliability of the output generated.

"It is reiterated that non-compliance to the provisions of the IT Act and/or IT Rules would result in potential penal consequences to the intermediaries or platforms or its users when identified, including but not limited to prosecution under IT Act and several other statutes of the criminal code," said the IT Ministry advisory.